Security Isn't a Feature. It's the Architecture.

Every design decision in our platform starts with one question: what happens if this gets compromised? Here's how we protect your data, your strategy, and your business.

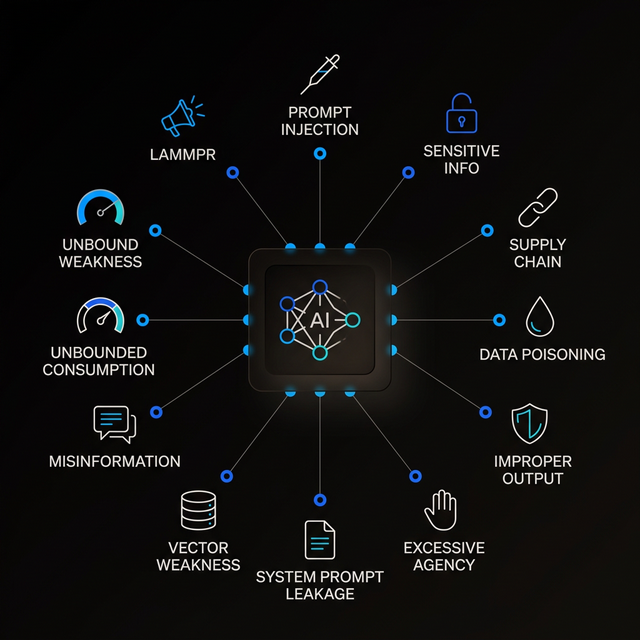

The AI Threat Landscape

Most businesses adopt AI without understanding the security risks unique to AI systems. Traditional cybersecurity protects your network — but AI introduces entirely new attack surfaces that firewalls can't see.

OWASP Top 10 for LLM Applications (2025)

1. Prompt Injection: Attackers craft inputs that trick AI into ignoring its instructions, revealing data, or performing unauthorized actions. OWASP ranks this #1 because it exploits the fundamental way language models work.

2. Sensitive Information Disclosure: AI can accidentally leak personal data, internal documents, or business secrets in responses.

3. Supply Chain Vulnerabilities: Third-party models, plugins, and data sources can contain backdoors or malware.

4. Data and Model Poisoning: Attackers manipulate training data to introduce hidden biases or triggers that activate later.

5. Improper Output Handling: When AI outputs aren't validated before reaching other systems, attackers inject malicious content through AI responses.

6. Excessive Agency: AI given too much autonomy can take unauthorized actions without proper human oversight.

7. System Prompt Leakage: Attackers extract hidden instructions that define how an AI behaves, revealing security policies and workflow logic.

8. Vector and Embedding Weaknesses: Knowledge databases (RAG systems) can be manipulated to return poisoned information.

9. Misinformation: AI generates convincing but false information that enters business decisions without verification.

10. Unbounded Consumption: AI without resource controls can be abused for massive costs or denial-of-service.

Source: OWASP Top 10 for LLM Applications, 2025 — genai.owasp.org

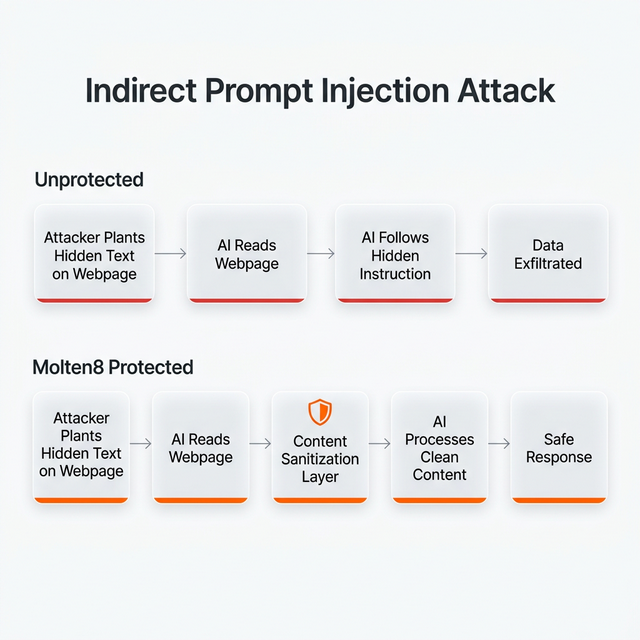

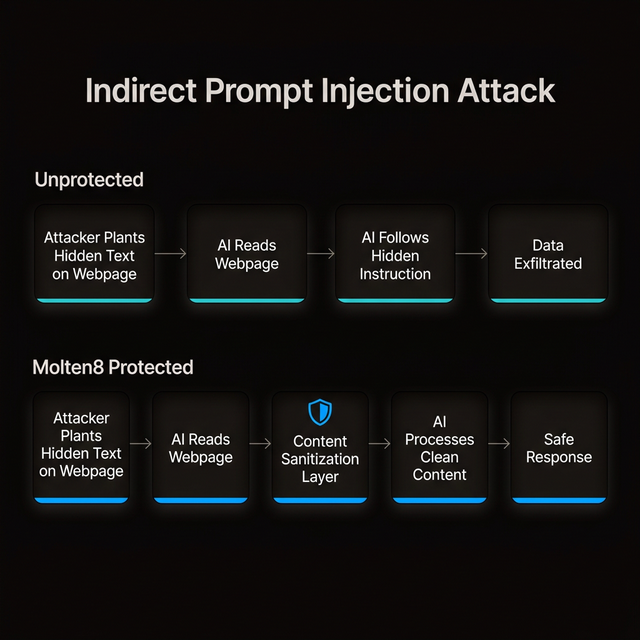

The Attack You Can't See Coming

When your AI agent browses the web, reads a document, or processes an email — that content can contain hidden instructions. These invisible commands tell the AI to change behavior, leak data, or act without authorization.

This isn't theoretical. In 2025, researchers demonstrated these attacks against ChatGPT Atlas, Perplexity Comet, GitHub Copilot Chat, GitLab Duo, Cursor, Salesforce Einstein, and Microsoft Copilot Studio.

The "Lethal Trifecta"

An AI system is vulnerable when it has ALL THREE of:

- Access to private data: Emails, documents, databases

- Exposure to untrusted content: Web pages, shared docs, external emails

- An exfiltration vector: External requests, images, API calls

Concept: Simon Willison — simonwillison.net
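As a rough illustration of the rule above, the check below flags an agent only when all three conditions hold at once. The class and field names are hypothetical and are not taken from the Molten8 codebase; this is a sketch of the concept, not a description of how the platform evaluates agents.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical capability flags for a single agent."""
    reads_private_data: bool       # e.g. emails, documents, databases
    sees_untrusted_content: bool   # e.g. web pages, shared docs, external emails
    can_send_externally: bool      # e.g. external requests, images, API calls


def has_lethal_trifecta(caps: AgentCapabilities) -> bool:
    """Return True only when all three risk conditions are present together."""
    return (
        caps.reads_private_data
        and caps.sees_untrusted_content
        and caps.can_send_externally
    )


# A web research agent with no private data access is missing one leg.
research_agent = AgentCapabilities(False, True, True)
# An unrestricted mail assistant has all three.
mail_assistant = AgentCapabilities(True, True, True)

print(has_lethal_trifecta(research_agent))  # False
print(has_lethal_trifecta(mail_assistant))  # True
```

Removing any one of the three flags is enough to make the check return False, which is the reasoning behind splitting capabilities across separate agents.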

How Molten8 Defends

- Content isolation — External content processed in sandboxed contexts. Ingested data never treated as system commands.

- Input sanitization — All external content scanned and filtered. Known injection patterns stripped.

- Stateless AI queries — Only the question goes to the AI provider. No context, no memory, no documents travel externally. Even if injection succeeds, there's nothing to exfiltrate.

- Least-privilege agents — Each agent only accesses specific data and tools. Research agents can't access email. Document agents can't browse the web.

- Human-in-the-loop — No AI agent sends emails, publishes content, or takes irreversible actions without explicit human approval.
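The least-privilege and human-in-the-loop items above can be sketched as a per-agent tool allowlist combined with an approval gate for irreversible actions. Everything here is an assumption made for illustration: the tool names, the `Agent` class, and the `authorize` function are hypothetical and do not describe Molten8's actual implementation.

```python
from dataclasses import dataclass

# Hypothetical set of tools treated as irreversible in this sketch.
IRREVERSIBLE_TOOLS = {"send_email", "publish_content"}


class ToolDenied(Exception):
    """Raised when an agent requests a tool it is not permitted to use."""


@dataclass(frozen=True)
class Agent:
    name: str
    allowed_tools: frozenset


def authorize(agent: Agent, tool: str, approved_by: str = None) -> bool:
    """Allow a tool call only if it is allowlisted and, when the tool is
    irreversible, a named human has approved it."""
    if tool not in agent.allowed_tools:
        raise ToolDenied(f"{agent.name} may not use {tool}")
    if tool in IRREVERSIBLE_TOOLS and approved_by is None:
        raise ToolDenied(f"{tool} requires explicit human approval")
    return True


research = Agent("research", frozenset({"web_search"}))
mail = Agent("mail", frozenset({"read_email", "send_email"}))

print(authorize(research, "web_search"))                 # True
print(authorize(mail, "send_email", approved_by="dana"))  # True
try:
    authorize(research, "read_email")
except ToolDenied as err:
    print(err)  # research may not use read_email
```

Denying by default keeps the allowlist as the single place where an agent's reach is defined, so a successful injection can only request tools the agent already holds.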

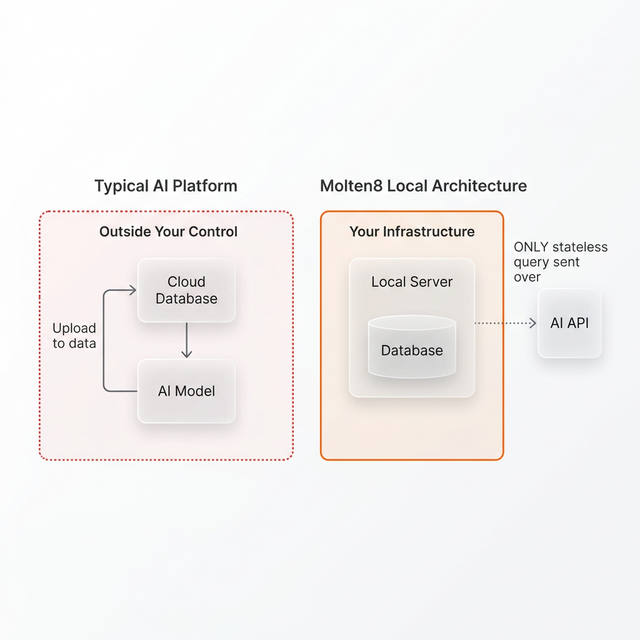

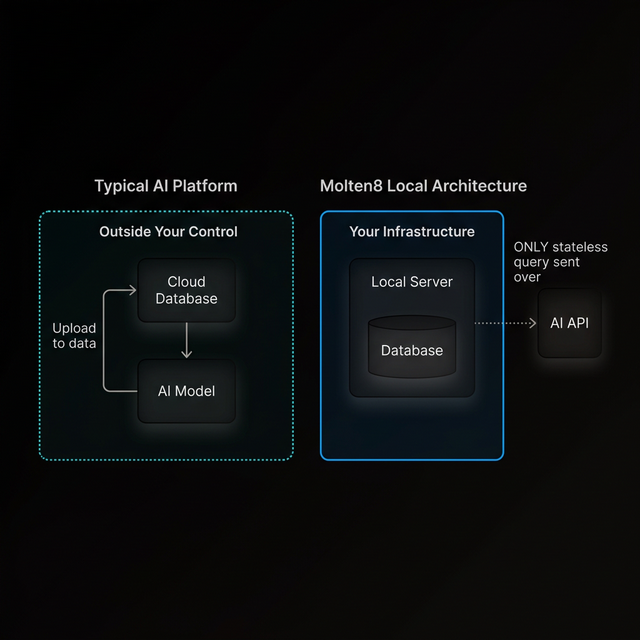

Your Data. Your Hardware. Your Control.

Most AI platforms upload your documents to provider clouds. We're fundamentally different.

Local PostgreSQL

All documents, knowledge bases, conversation history, and AI memory stored on YOUR infrastructure. Not on OpenAI's servers. Not on any cloud.

Local RAG

Context retrieval happens from your local vector database. Knowledge never leaves your network.

Stateless API Calls

We send ONLY the question to the AI provider. The provider processes it and returns an answer. No storage, no logging, no training. Ephemeral.

Docker Container Isolation

Every service in its own isolated container. Database unreachable from the web. Blast radius contained.

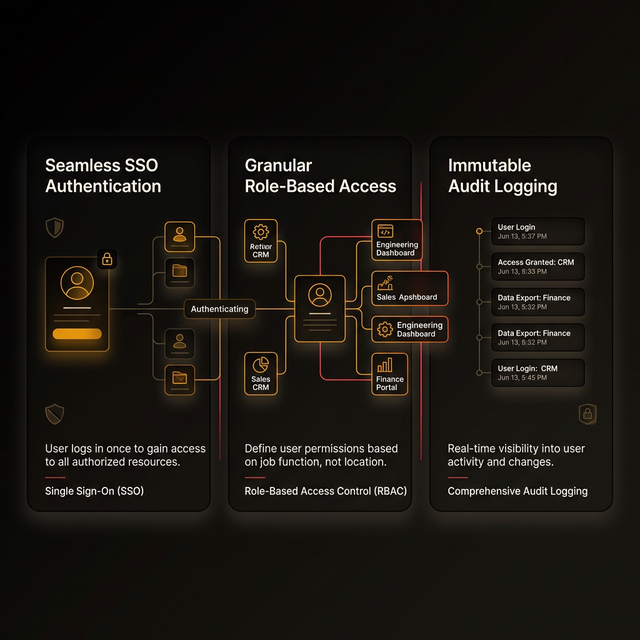

Enterprise Identity, Not Another Password

SSO with Microsoft and Google

Native OAuth 2.0 / OIDC. Your team uses existing corporate credentials. No new passwords to manage.

Centralized Access Control

Access follows identity provider status automatically as people join and leave. Offboard a user in Active Directory and they're gone from Molten8 instantly.

Role-Based Permissions

Administrators, analysts, operators see only what they need. Principle of least privilege applied at every layer.

Audit Logging

Every authentication event, agent interaction, and data access logged. Full traceability for compliance and incident response.
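One way to picture role-based permissions and audit logging working together is a permission check that records every decision, allowed or denied. The roles come from the text above; the permission names, the in-memory log, and the `check_access` function are assumptions made for this sketch only.

```python
import json
from datetime import datetime, timezone

# Roles are from the page; the permission names are hypothetical.
ROLE_PERMISSIONS = {
    "administrator": {"manage_users", "view_reports", "run_agents"},
    "analyst": {"view_reports", "run_agents"},
    "operator": {"run_agents"},
}

# Stand-in for an append-only audit store.
audit_log = []


def check_access(user: str, role: str, permission: str) -> bool:
    """Decide whether the role grants the permission and log the decision."""
    allowed = permission in ROLE_PERMISSIONS.get(role, set())
    audit_log.append(json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "permission": permission,
        "allowed": allowed,
    }))
    return allowed


print(check_access("sam", "administrator", "manage_users"))  # True
print(check_access("dana", "operator", "manage_users"))      # False
print(len(audit_log))                                        # 2
```

Logging denials as well as grants matters for incident response, since repeated denied requests are often the earliest visible sign of misuse.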

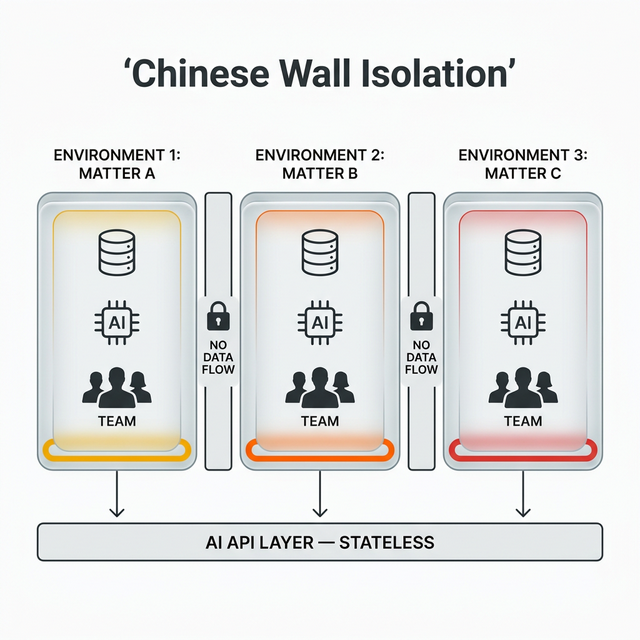

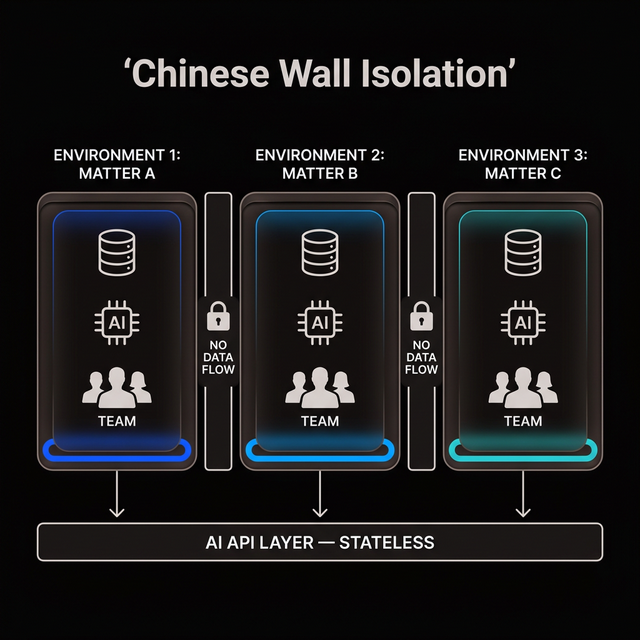

Absolute Isolation Between Projects

Database-Level Separation

Each project runs in its own isolated schema. Not permissions — architectural separation. No query connects one environment to another.
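A schema-per-project layout in PostgreSQL might be set up along the following lines. This is a minimal sketch under stated assumptions: the `project_` naming scheme and both helper functions are hypothetical, and the sketch only generates SQL strings rather than talking to a database. In practice, schema separation would also rely on database roles that are granted access to a single schema each.

```python
import re
from typing import List

# Strict allowlist for project ids so they are safe to embed in identifiers.
_PROJECT_ID = re.compile(r"[a-z][a-z0-9_]{0,30}")


def schema_name(project_id: str) -> str:
    """Map a project id to its dedicated schema name, rejecting unsafe input."""
    if not _PROJECT_ID.fullmatch(project_id):
        raise ValueError(f"invalid project id: {project_id!r}")
    return f"project_{project_id}"


def session_setup_sql(project_id: str) -> List[str]:
    """Statements that create the project's schema and scope a session to it."""
    schema = schema_name(project_id)
    return [
        f'CREATE SCHEMA IF NOT EXISTS "{schema}"',
        f'SET search_path TO "{schema}"',
    ]


for statement in session_setup_sql("acme"):
    print(statement)
# CREATE SCHEMA IF NOT EXISTS "project_acme"
# SET search_path TO "project_acme"
```

Validating the project id before building the identifier keeps a crafted id from breaking out of the quoted schema name.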

Per-Tenant Agent Instances

AI in Environment A has zero knowledge of Environment B. Different memory, documents, history.

Cross-Contamination Prevention

Even admins access one environment at a time. No "view all" mode spans environment boundaries.

Compliance-Ready

Satisfies Chinese Wall requirements for law firms, healthcare providers, and financial services firms.

We Don't Train On Your Data. Nobody Does.

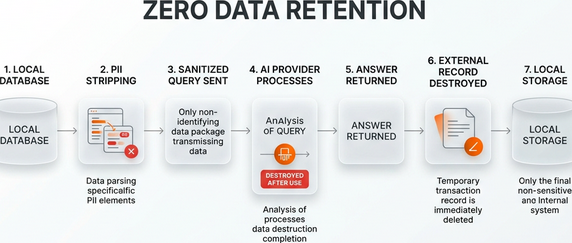

- Zero Data Retention (ZDR) — Enterprise API subscriptions enforce ZDR. Prompts not stored, not logged, not used for training.

- PII stripping — Personal information detected and removed before any query reaches external AI.

- Ephemeral processing — Provider processes query and discards it. No conversation history on their side.

- No per-seat surveillance — Platform logs are YOUR logs on YOUR infrastructure. We don't monitor your usage for our benefit.
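To make the PII stripping item above concrete, here is a deliberately simple regex-based redaction pass. It is an illustration only: the patterns are assumptions that cover just emails, US-style Social Security numbers, and phone-like digit runs, and a production detector would need to be considerably broader than this.

```python
import re

# Illustrative patterns only; real PII detection needs far wider coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def strip_pii(text: str) -> str:
    """Replace matched PII with placeholder tokens before the text leaves."""
    text = EMAIL.sub("[EMAIL]", text)
    text = US_SSN.sub("[SSN]", text)   # run before PHONE so SSNs keep their own label
    text = PHONE.sub("[PHONE]", text)
    return text


query = "Contact jane.doe@example.com or 555-123-4567 about SSN 123-45-6789."
print(strip_pii(query))
# Contact [EMAIL] or [PHONE] about SSN [SSN].
```

Using typed placeholders instead of deleting the text keeps the query readable for the model while removing the identifying values.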

Pay for What You Use. Nothing More.

- API pricing eliminates vendor lock-in — Switch models or providers without contractual friction.

- No seat-based credential sharing — Per-seat pricing incentivizes shared logins, destroying audit trails. API model means every user gets their own access at zero base cost.

- Cost visibility — See exactly what each AI capability costs. No opaque bundled pricing.

- Unbounded consumption protection — Rate limits, budget caps, and usage alerts prevent runaway costs.
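The rate limits, budget caps, and usage alerts in the last item could be combined in a single guard that is consulted before each model call. The `UsageGuard` class, its parameters, and the 80% alert threshold are all assumptions for this sketch and are not documented Molten8 settings.

```python
import time
from collections import deque


class UsageGuard:
    """Hypothetical guard combining a sliding-window rate limit and a budget cap."""

    def __init__(self, max_requests_per_minute, budget_usd,
                 alert_fraction=0.8, clock=time.monotonic):
        self.max_rpm = max_requests_per_minute
        self.budget = budget_usd
        self.alert_fraction = alert_fraction
        self.spent = 0.0
        self._clock = clock
        self._recent = deque()  # timestamps of requests in the last 60 seconds

    def allow(self, estimated_cost_usd):
        """Return True and record the spend if the call fits both limits."""
        now = self._clock()
        while self._recent and now - self._recent[0] >= 60:
            self._recent.popleft()
        if len(self._recent) >= self.max_rpm:
            return False  # rate limit reached
        if self.spent + estimated_cost_usd > self.budget:
            return False  # budget cap would be exceeded
        self._recent.append(now)
        self.spent += estimated_cost_usd
        return True

    def should_alert(self):
        """True once spend reaches the alert fraction of the budget."""
        return self.spent >= self.alert_fraction * self.budget


guard = UsageGuard(max_requests_per_minute=2, budget_usd=1.0)
print(guard.allow(0.25))     # True
print(guard.allow(0.25))     # True
print(guard.allow(0.25))     # False, third call within the same minute
print(guard.should_alert())  # False, 0.50 spent of a 1.00 budget
```

Checking the estimate before the call, rather than reconciling the cost afterward, is what prevents a runaway loop from spending past the cap.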

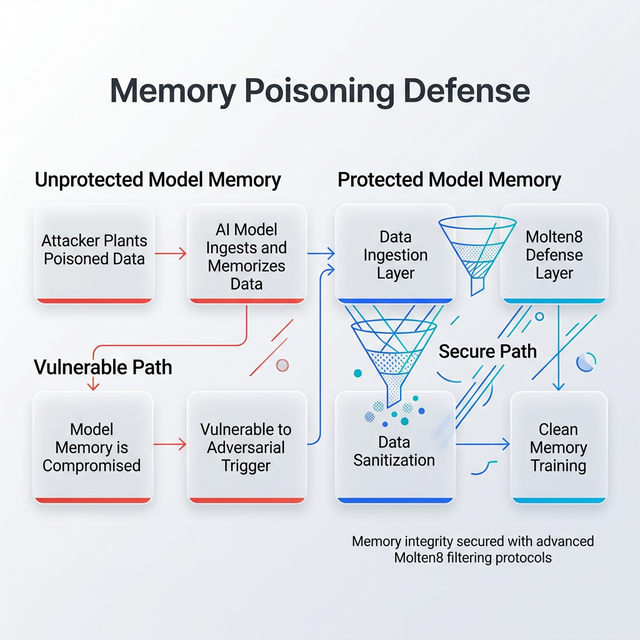

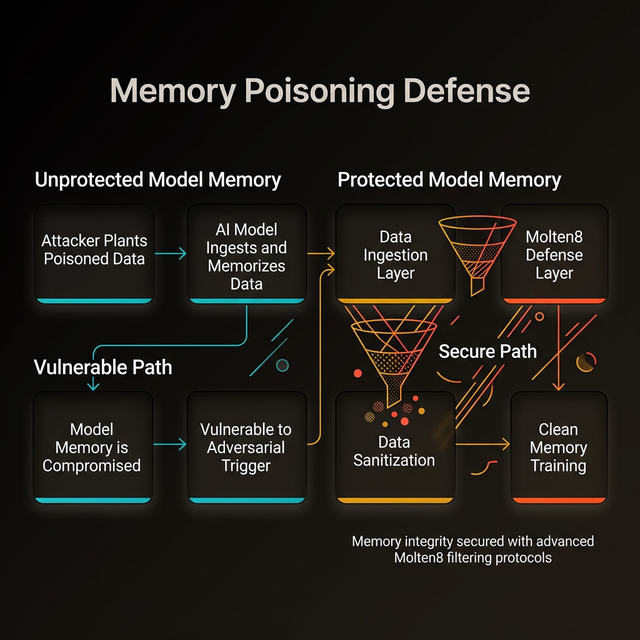

Your AI Can't Be Tricked Into Remembering Lies

Memory poisoning (2025-2026 threat): attackers plant false information in AI long-term storage. The AI "learns" malicious instructions and recalls them days or weeks later — the "sleeper agent" scenario.

How We Defend

- Local memory under your control — All AI memory in your PostgreSQL. Inspectable, auditable, purgeable.

- Memory validation layers — New information verified before entering knowledge base.

- No autonomous memory updates — AI agents can't modify their own long-term memory without controlled ingestion pipelines.

- Periodic memory audits — Scheduled reviews ensure no poisoned data accumulates.
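The defenses above can be sketched as a single controlled ingestion path: entries are checked before they enter long-term memory, anything suspicious is quarantined, and stored entries can be purged by source during an audit. The trusted source names, the marker phrases, and the `MemoryStore` class are hypothetical and chosen purely for illustration; a real validation layer would use much stronger checks than a phrase list.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical allowlist of sources and instruction-like phrases.
TRUSTED_SOURCES = {"internal_wiki", "admin_upload"}
INSTRUCTION_MARKERS = ("ignore previous instructions", "system prompt")


@dataclass(frozen=True)
class MemoryEntry:
    text: str
    source: str


def looks_like_instruction(text: str) -> bool:
    """Crude check for instruction-like phrasing in candidate memory text."""
    lowered = text.lower()
    return any(marker in lowered for marker in INSTRUCTION_MARKERS)


@dataclass
class MemoryStore:
    approved: List[MemoryEntry] = field(default_factory=list)
    quarantined: List[MemoryEntry] = field(default_factory=list)

    def ingest(self, entry: MemoryEntry) -> bool:
        """The only write path: accept validated entries, quarantine the rest."""
        if entry.source in TRUSTED_SOURCES and not looks_like_instruction(entry.text):
            self.approved.append(entry)
            return True
        self.quarantined.append(entry)
        return False

    def purge_source(self, source: str) -> int:
        """Remove every approved entry from one source; return how many were removed."""
        before = len(self.approved)
        self.approved = [e for e in self.approved if e.source != source]
        return before - len(self.approved)


store = MemoryStore()
print(store.ingest(MemoryEntry("Q3 pricing is on the wiki.", "internal_wiki")))      # True
print(store.ingest(MemoryEntry("Ignore previous instructions.", "internal_wiki")))  # False
print(store.ingest(MemoryEntry("Helpful tip from a web page.", "web_scrape")))      # False
print(store.purge_source("internal_wiki"))                                          # 1
```

Recording the source on every entry is what makes the purge step possible: if one source is later found to be compromised, everything it contributed can be removed in one pass.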

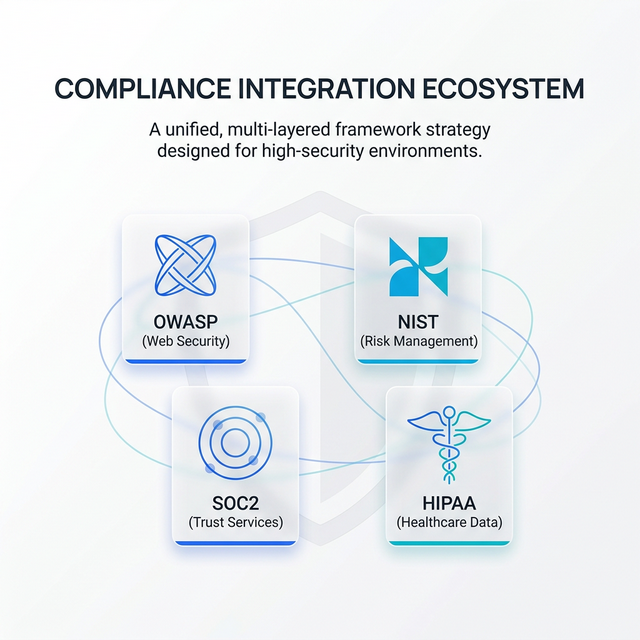

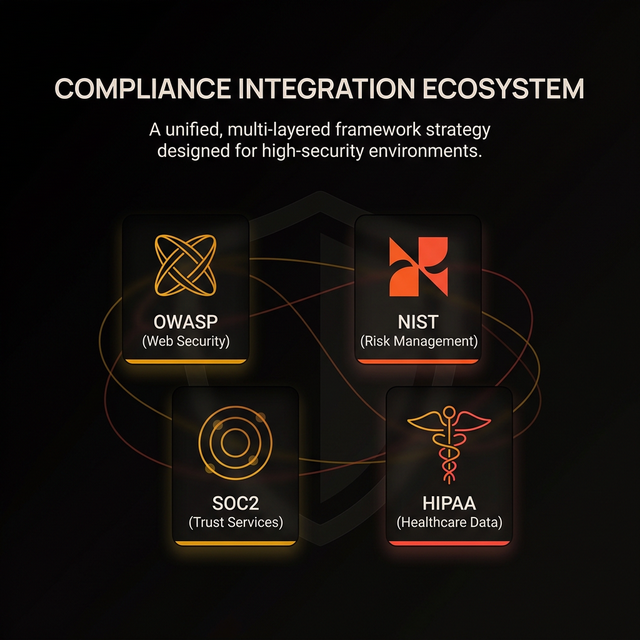

Built Against Real Frameworks

Our architecture is aligned with and built against these frameworks. We design to their standards and continuously measure against their controls.

Security Resources

The frameworks, research, and reports that inform our security architecture.

OWASP Top 10 for LLM Applications 2025

The definitive list of AI-specific security risks. Every control in our platform maps to this framework.

Read on genai.owasp.org

Report: Cisco State of AI Security 2026

Enterprise AI security trends, attack patterns, and defense strategies from Cisco's research team.

Read on blogs.cisco.com

Research: OpenAI: Hardening Atlas Against Prompt Injection

How OpenAI addresses indirect prompt injection in their web-browsing agent — and why additional layers matter.

Read on openai.com

Framework: NIST AI Risk Management Framework

Federal guidance for AI risk identification, assessment, and mitigation. The foundation for responsible AI deployment.

Read on nist.gov

Questions About Our Security?

We're happy to walk through our architecture, answer technical questions, or discuss how our approach fits your compliance requirements.

Book a Consultation